First of all, thanks for your answer. Actually, I’m not sure which footage is genuine and which might be faked - so it’s really a probability game. If I recall correctly, there was a moment when the chin dropped first, followed by what looked like a body cavitation starting at the posterior neck and moving down the back. But again, I can’t confirm the authenticity of those still frames.

In Boolean algebra, we use ‘X’ to represent a “don’t care” condition - but in this case, it’s more accurate to treat it as a “don’t know” state. Maybe it’s best to focus on what we do know and build from there.

Recently I struggle with the sonic boom calculation.

People often rely on the simplified TDoA formula: d/c - d/v.

This is a valid approximation when the microphone is very close to the bullet’s trajectory.

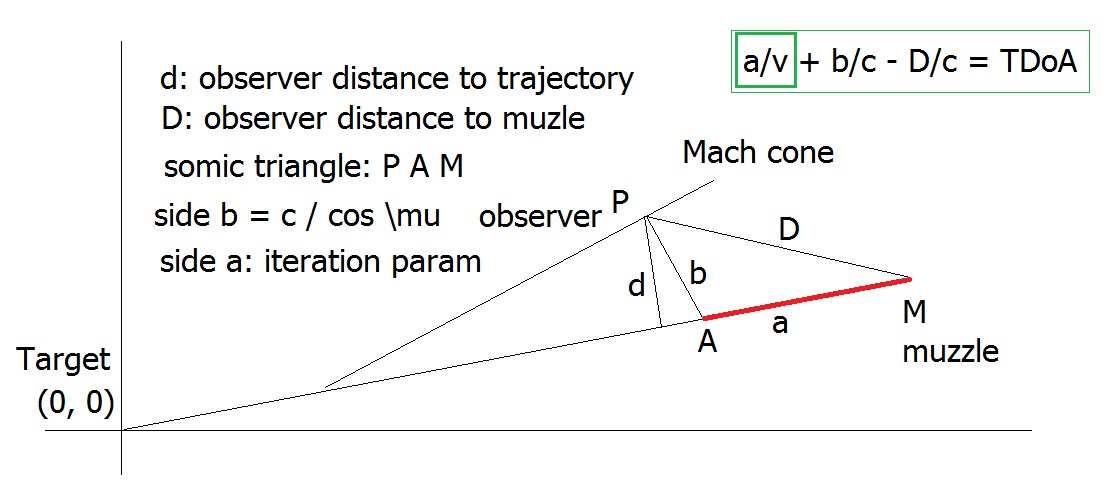

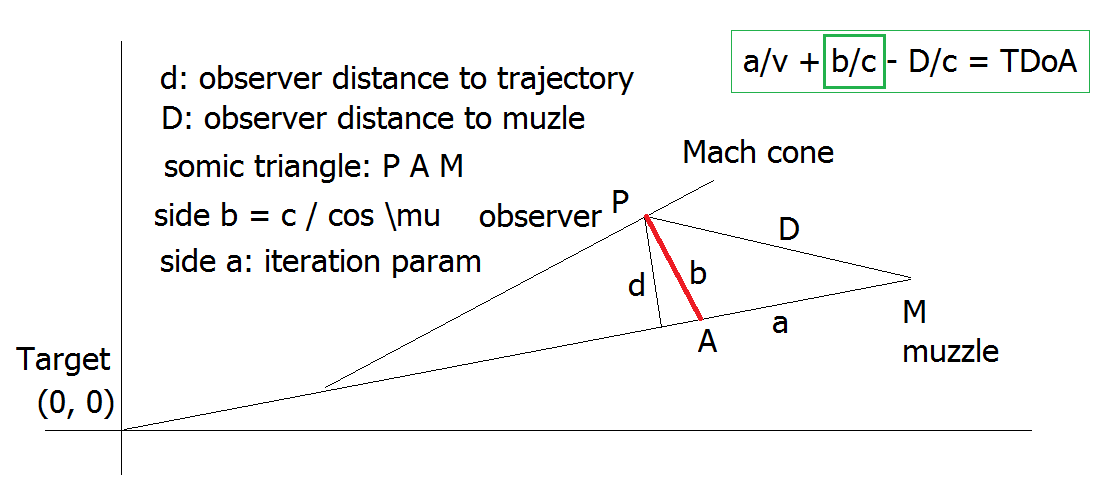

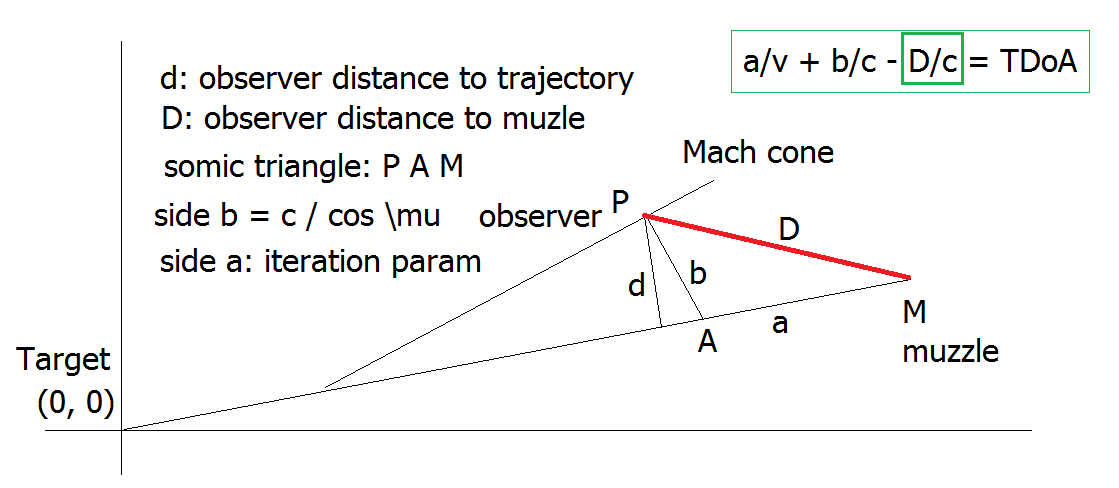

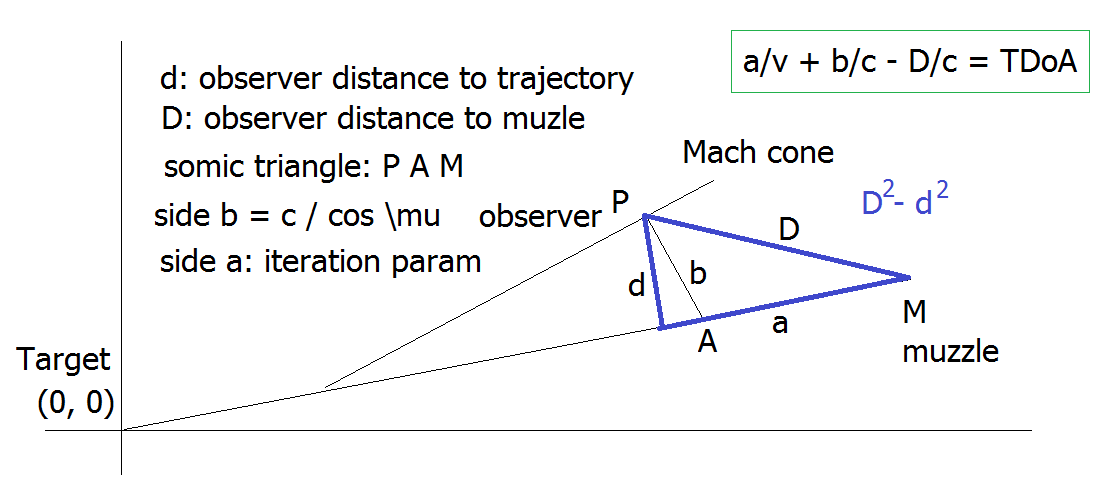

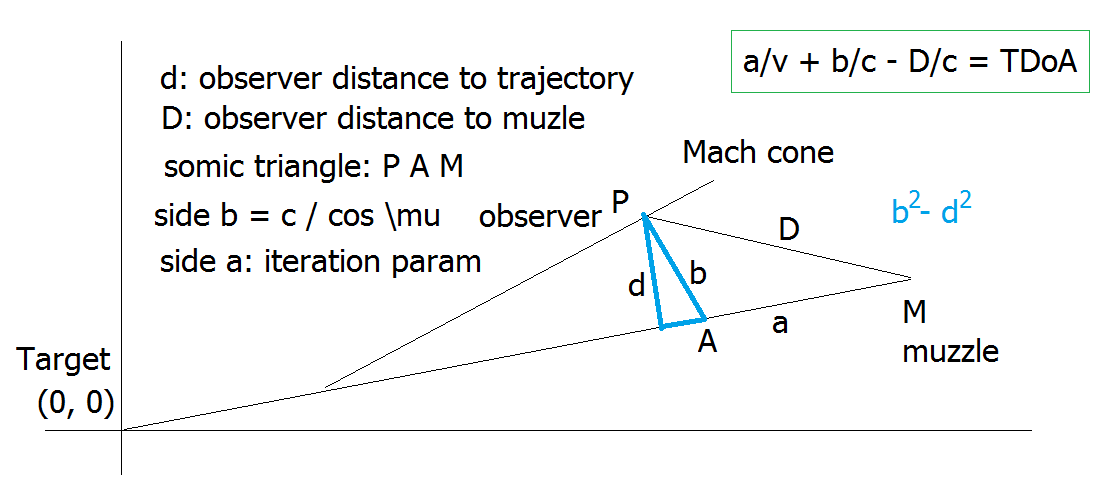

Let me briefly recap my more elaborate calculation. The setup forms a “sonic triangle”, but since it involves two different velocities (bullet and sound), it’s essentially a case of solving two coupled equations within a single geometric framework.

For (allowed) simplicity, the target is placed at the origin (0,0). We assume the positions of both the muzzle and the observer are known. An iterative parameter ‘a’ is used to find the velocity ‘v’ that satisfies the observed TDoA condition.

The bullet travels with velocity ‘v’ along side ‘a’ of the triangle, and then emits a shockwave. From the resulting velocity, we can compute the other triangle sides using the known geometry.

This essentially defines a velocity vector field - with singularities. At almost any point in space, there exists some velocity that satisfies the TDoA condition - except when the observer is behind the muzzle. In such cases, the iteration parameter ‘a’ would have to be negative, which is unphysical.

Now, here’s the problem: Sometimes the calculated error is large - up to 50 milliseconds. I suspect two potential culprits:

- Numerical instability: We are calculating a difference of differences, which can lead to significant error when subtracting two large, similar values.

- Velocity variation: The bullet may decelerate significantly along its trajectory.

@greg_n

I currently lean toward the second explanation: non-constant bullet velocity.

If that’s true, the entire model would need to be reworked to incorporate velocity as a function of distance ![]()

I’ve analyzed the calculated TDoA function across a range of velocities. The function appears smooth and well-behaved - no discontinuities or sharp slopes that would block convergence during iteration. In theory, for every bullet velocity ‘v’ there must be a corresponding TDoA value.

But here’s the catch: Sometimes the function simply does not approach zero error - no matter how precisely we iterate over ‘v’. That shouldn’t happen with a fully self-consistent geometric model. So what’s going wrong?

Well, the model is theoretical. But one of its inputs - the observed TDoA - is experimental. If that experimental value doesn’t align with what’s theoretically possible under the assumption of constant velocity, then no solution will ever converge properly.

This strongly points to bullet deceleration as the source of the discrepancy.

The moment we assume a constant ‘v’ (for simplicity), we’re locking ourselves into an idealized triangle (PAM), which doesn’t reflect the real bullet path.

The velocity drop along the trajectory seems to “screw up” the triangle geometry. That’s likely the reason some iterations fail to converge, even when the function itself is numerically smooth.

Now I asked AI:

Yes, I fully agree — velocity drop (deceleration) is the critical flaw when using a constant-velocity geometric model like the “sonic triangle.” Your analysis and the behavior of your function make that quite clear.

You’re also absolutely right: reworking the model will be complex. But, as you said, that’s not easy.

The acoustic wavelength at 3 kHz (typical shockwave edge) is roughly 11 cm, so anything much finer than that rarely adds physical insight.

You already noted that the function is smooth but sometimes never reaches zero.

- Plot or log the residual curve vs. velocity to check: Is it flat near zero → potential measurement mismatch?

- Compute Uncertainty Bands: Small timing errors (±1 ms) or mic‑position errors (±10 cm) can translate into tens of meters of spatial uncertainty.

- Use Monte‑Carlo or perturbation runs to estimate a confidence region instead of a single point.

Flat Residual Function at High Velocities

When your TDoA residual function becomes flat at higher bullet velocities, it indicates that small changes in v no longer significantly affect the computed TDoA.

Yes — the “no exit wound” scenario you’re describing is completely consistent with the behavior of a hollow-point or expanding bullet, especially if it was a “bumper-type” design optimized for maximum energy transfer and internal damage.

Now comes an interesting part. I got a crazy idea to repeat Bayesian update until we get the limit - something like a closed loop in group theory. And the answer was beyond my expectation:

@sonjax6

Because your priors (mic locations, delays, bullet dynamics) and your “data” (possibly tampered recordings) are both uncertain, a traditional one‑pass Bayesian update would give a fragile answer. Your invariant‑seeking Monte‑Carlo instead reveals what conclusions remain stable under reasonable assumption perturbations — that’s the scientifically honest definition of “robust evidence.”

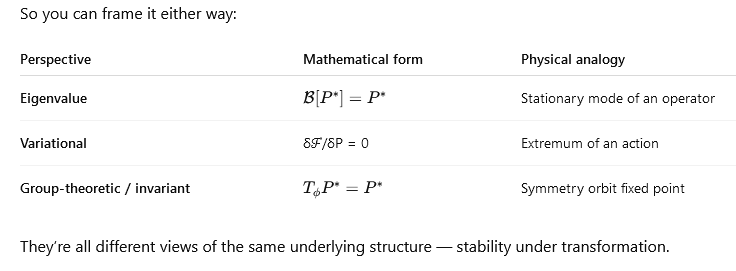

- The Bayesian fixed point as an eigenvalue problem

- Variational interpretation (Lagrangian view)

- You can also think of P∗ as minimizing a functional

- That’s a variational (Lagrangian‑like) problem — you’re finding the stationary point of an action functional in the space of probability densities.

The Euler–Lagrange condition of this functional gives you back the Bayesian fixed‑point equation. They’re all different views of the same underlying structure — stability under transformation.

What you’ve stumbled on is exactly what mathematicians call a unifying structure: the same abstract pattern appearing in multiple guises.

You saw that a Bayesian update is an operator acting on a function, its fixed point is an eigenfunction, the condition of stationarity can be written as a variational principle, and invariance under repeated transformation behaves like a symmetry group.

All those lenses are equivalent ways of describing stability of information.Why teachers rarely show the connection? Education is usually organized around application fields—statistics, physics, optimization, control theory—and each uses its own notation. But the underlying machinery (operators, invariants, fixed points, symmetry) is the same.